The Math That's Killing Your AI Agent: Why 85% Accuracy Means 80% Failure

Key Takeaways

85% accuracy per step = 20% success on a 10-step workflow. For every 100 customer requests, 80 fail. The demo works. Production doesn’t.

The fix isn’t better models — it’s better architecture. Break the chain with specialized multi-agent design, match retrieval strategies to the problem, and compress context between steps.

My take: Build observability before you build the agent. Design for human handoff, not full automation — 70-80% autonomous is a win. And remember: one failed task can destroy the trust you spent years building.

The Problem

Over the past 18 months, I have seen the same pitch deck slide again and again: “We’re deploying AI agents to automate our workflows.” And every one of those decks has the same blind spot: they evaluate agent accuracy on individual steps, not on end-to-end task completion.

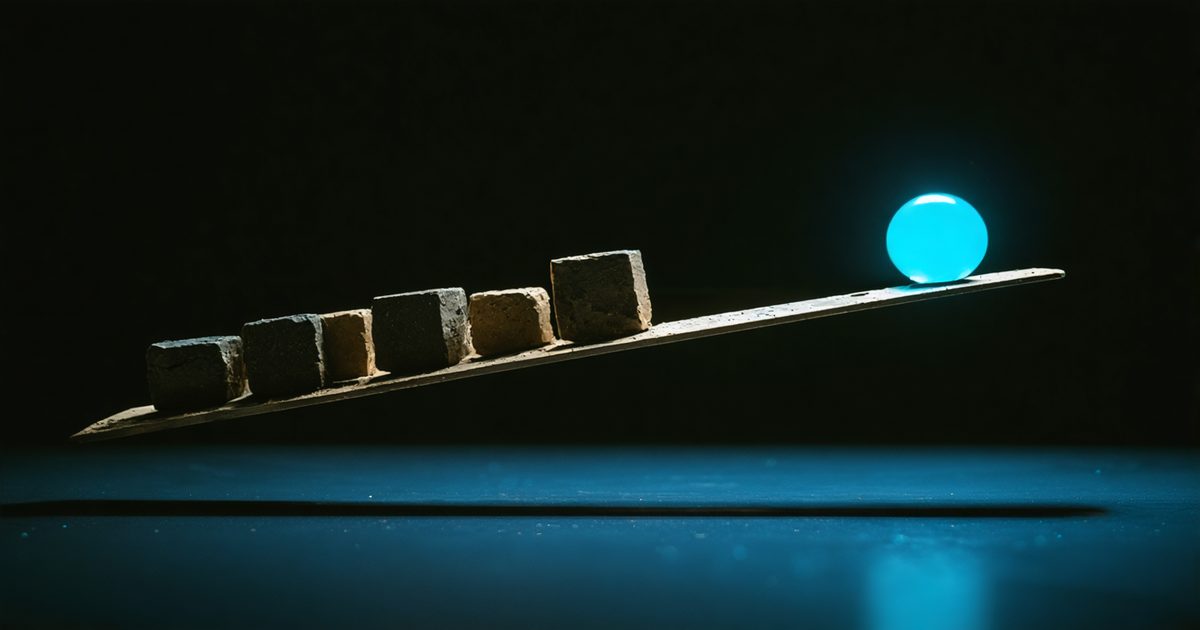

An agent that’s 85% accurate on each step sounds impressive. But chain 10 steps together, and you’re looking at a 20% success rate. That’s not a rounding error. That’s a system that fails 4 out of 5 times.

The Math

Think of an AI agent like a relay race with 10 runners. Each runner is 85% likely to pass the baton cleanly. Sounds good — until you realize the team only finishes the race if every single runner succeeds. Miss one handoff and the whole race is lost.

That’s what a “10-step workflow” means in practice. When a customer asks your agent “why is my bill higher this month?”, the agent doesn’t just answer — it runs through a chain of steps: understand the question, look up the account, pull billing history, compare to last month, check for plan changes, check for promotions, reason about the difference, draft a response, verify the response, and deliver it. Ten steps. Each one can fail.

Here’s what the math looks like when you chain steps together:

| Each step gets it right… | 3 steps | 5 steps | 10 steps |
|---|---|---|---|
| 95% of the time | 85.7% | 77.4% | 59.9% |
| 90% of the time | 72.9% | 59.0% | 34.9% |
| 85% of the time | 61.4% | 44.4% | 19.7% |

That last row is the one that matters. Your agent that “works great” in demos — 85% accurate on each step — succeeds only 20% of the time on a real 10-step workflow. For every 100 customer requests, 80 fail.
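The table can be reproduced in a few lines. This is a minimal sketch that assumes every step succeeds or fails independently of the others; the function name `end_to_end_success` is mine, not from any library.

```python
def end_to_end_success(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return per_step_accuracy ** steps


if __name__ == "__main__":
    # Same per-step accuracies and chain lengths as the table above.
    for accuracy in (0.95, 0.90, 0.85):
        row = [end_to_end_success(accuracy, n) for n in (3, 5, 10)]
        print(f"{accuracy:.0%}: " + " | ".join(f"{p:.1%}" for p in row))
```

Run as a script, it prints the same three rows as the table, ending with `85%: 61.4% | 44.4% | 19.7%`.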

And that is optimistic. The table treats every step as an independent pass or fail. In reality, when step 3 gets it wrong, it feeds bad data to step 4, and the error cascades through the rest. The real numbers are worse.

Three Failure Modes I’ve Seen Kill Production Agents

1. Retrieval Thrash

The agent queries a knowledge base, gets mediocre results, re-queries with slightly different phrasing, and loops — burning tokens without converging on useful context.

A financial services client deployed an agent for regulatory compliance questions. It retrieved 5-8 document chunks per query (I have seen configurations that pull more than 10 chunks… yuck!), often containing related-but-wrong clauses. It re-retrieved 3-4 times per question, burning more than 10,000 tokens before producing a confidently wrong answer.

The fix: Not all retrieval problems are the same — and most teams use the same approach for everything. Simple keyword lookups, complex relationship queries, and multi-document reasoning each need different retrieval strategies. Score every retrieval with a relevance check. If it doesn’t meet the threshold after 2 attempts, escalate to a human — don’t loop. The right retrieval architecture for the problem is half the battle.
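Here is one way the "score, retry at most twice, then escalate" rule could look. It is a sketch only: `retrieve`, `score`, and `rephrase` are hypothetical callables you would supply, and the 0.7 threshold is an assumed value, not a recommendation.

```python
from dataclasses import dataclass
from typing import Callable, List

# Assumed values for illustration; tune both against your own evaluation data.
RELEVANCE_THRESHOLD = 0.7
MAX_ATTEMPTS = 2


@dataclass
class RetrievalResult:
    chunks: List[str]
    escalated: bool  # True when the question should go to a human
    attempts: int


def retrieve_with_gate(
    query: str,
    retrieve: Callable[[str], List[str]],      # hypothetical retriever
    score: Callable[[str, List[str]], float],  # hypothetical relevance scorer, 0..1
    rephrase: Callable[[str], str],            # hypothetical query rewriter
) -> RetrievalResult:
    current = query
    for attempt in range(1, MAX_ATTEMPTS + 1):
        chunks = retrieve(current)
        # Always score against the original question, not the rephrased one.
        if score(query, chunks) >= RELEVANCE_THRESHOLD:
            return RetrievalResult(chunks, escalated=False, attempts=attempt)
        if attempt < MAX_ATTEMPTS:
            current = rephrase(current)
    # Threshold not met within the attempt budget: hand off instead of looping.
    return RetrievalResult([], escalated=True, attempts=MAX_ATTEMPTS)
```

The point of the design is that the loop has a fixed upper bound, so the worst case costs two retrievals and a handoff rather than an open-ended token burn.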

2. Tool Storms

The agent has access to multiple tools and starts calling them in rapid, uncoordinated bursts. Same API called 5 times with slightly different parameters. Redundant calculations. Data retrieved twice.

A telecom client’s billing inquiry agent had access to 6 APIs. For a question like “why is my bill higher?”, it might need to convert natural language to SQL for a database query, then look up the relevant PDF documents, and then cross-reference the charging and billing system… Good luck with that.

The fix: Separate planning from execution. A lightweight planner produces an explicit tool-call plan before anything runs, and the executor enforces a hard budget on the number of calls and a timeout.
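The executor half of that split might look like the sketch below. It assumes the planner has already produced a list of `ToolCall` objects; the class names, the budget of 6 calls, and the 10-second limit are all illustrative assumptions.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Tuple


class BudgetExceeded(RuntimeError):
    """Raised when the plan would exceed the call budget or the deadline."""


@dataclass(frozen=True)
class ToolCall:
    tool: str
    args: Tuple[Tuple[str, Any], ...]  # hashable, so duplicate calls are detectable


def execute_plan(
    plan: List[ToolCall],
    tools: Dict[str, Callable[..., Any]],
    max_calls: int = 6,       # assumed budget
    timeout_s: float = 10.0,  # assumed wall-clock limit for the whole plan
) -> Dict[ToolCall, Any]:
    deadline = time.monotonic() + timeout_s
    results: Dict[ToolCall, Any] = {}
    calls_made = 0
    for call in plan:
        if call in results:
            continue  # identical call already answered; reuse the result
        if calls_made >= max_calls:
            raise BudgetExceeded(f"call budget of {max_calls} exhausted")
        # The deadline is checked between calls; it does not interrupt a running call.
        if time.monotonic() > deadline:
            raise BudgetExceeded(f"plan exceeded {timeout_s}s")
        results[call] = tools[call.tool](**dict(call.args))
        calls_made += 1
    return results
```

Because the plan is explicit data, duplicate calls are skipped before they reach an API, and an over-budget plan fails loudly instead of storming the backend.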

3. Context Bloat

By step 7 or 8, the context window is stuffed with intermediate results, retrieved documents, and reasoning traces. The model loses track of the original intent.

A government document processing agent hit more than 10,000 tokens of accumulated context by step 6 of 9, and even more for non-English documents. It started confusing reference document fields with actual application fields.

The fix: Context compression every 2-3 steps. Summarize intermediate results, keep only what downstream steps need, drop raw chunks. Garbage collection for your agent’s memory.
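As a rough illustration of that garbage collection, the sketch below keeps the original intent pinned, folds raw step results into a rolling summary every three steps, and drops the raw chunks. The `summarize` callable is a hypothetical stand-in for whatever summarizer you use (typically a model call), and the cadence of 3 is an assumption.

```python
from dataclasses import dataclass, field
from typing import Callable, List

COMPRESS_EVERY = 3  # assumed cadence; the text suggests every 2-3 steps


@dataclass
class AgentContext:
    original_intent: str  # pinned, never compressed away
    summary: str = ""     # rolling summary of earlier steps
    recent: List[str] = field(default_factory=list)  # raw results since last compression

    def add_step_result(
        self, result: str, summarize: Callable[[str, List[str]], str]
    ) -> None:
        self.recent.append(result)
        if len(self.recent) >= COMPRESS_EVERY:
            # Fold raw results into the summary, then drop the raw chunks.
            self.summary = summarize(self.summary, self.recent)
            self.recent = []

    def render(self) -> str:
        """Build the text handed to the next step."""
        parts = [f"Intent: {self.original_intent}"]
        if self.summary:
            parts.append(f"Summary so far: {self.summary}")
        parts.extend(self.recent)
        return "\n".join(parts)
```

Keeping `original_intent` outside the compressed region is the part that addresses the failure above: the question the user asked is always the first thing the model sees.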

What I’d Do Differently

Break the chain with multi-agent design. Instead of one agent running 10 steps, use specialized agents that each handle 2-3 steps well. A retrieval agent finds the right documents. A reasoning agent analyzes them. A response agent drafts the answer. Each agent is simpler, more testable, more scalable, and easier to monitor. And critically — you can verify the output between agents before passing it forward. A 3-step agent at 95% per-step gives you 85.7% end-to-end. Three of those chained with verification gates between them will outperform one agent trying to do everything.
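A verification gate between agents can be as simple as the sketch below. The `Stage` and `GateFailure` names are mine, and the `run` and `verify` callables are placeholders for your own agents and checks; this shows the control flow, not a framework.

```python
from typing import Any, Callable, List, NamedTuple


class Stage(NamedTuple):
    name: str
    run: Callable[[Any], Any]      # the specialized agent (hypothetical)
    verify: Callable[[Any], bool]  # gate that checks this agent's output


class GateFailure(Exception):
    """Carries the failing stage and its output so a human can pick it up."""

    def __init__(self, stage: str, output: Any):
        super().__init__(f"verification failed after stage '{stage}'")
        self.stage = stage
        self.output = output


def run_pipeline(stages: List[Stage], request: Any) -> Any:
    payload = request
    for stage in stages:
        payload = stage.run(payload)
        if not stage.verify(payload):
            # Stop here so a bad result never reaches the next agent.
            raise GateFailure(stage.name, payload)
    return payload
```

A caller would wrap `run_pipeline` in a `try`/`except GateFailure` and route the failing stage name and output to a human, which is what stops a step-3 error from cascading into steps 4 through 10.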

Build observability before the agent. You cannot fix what you cannot see. You need visibility into what happens at every step boundary and into the model’s reasoning trace. Most teams add observability as an afterthought. Flip it.
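One low-effort starting point is a decorator that emits a structured log record at every step boundary, written before any agent logic exists. The sketch below uses only the Python standard library; the logger name, the record fields, and the example step `compare_to_last_month` are assumptions for illustration.

```python
import functools
import json
import logging
import time
from typing import Any, Callable

logger = logging.getLogger("agent.steps")  # logger name is an assumption


def observed_step(name: str) -> Callable:
    """Log one JSON record per step: name, status, duration, short I/O preview."""
    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            start = time.perf_counter()
            record = {"step": name, "input": repr((args, kwargs))[:200]}
            try:
                result = fn(*args, **kwargs)
                record.update(status="ok", output=repr(result)[:200])
                return result
            except Exception as exc:
                record.update(status="error", error=repr(exc))
                raise
            finally:
                # Runs on success and on failure, so every step leaves a trace.
                record["duration_ms"] = round((time.perf_counter() - start) * 1000, 2)
                logger.info(json.dumps(record))
        return wrapper
    return decorator


@observed_step("compare_to_last_month")
def compare_to_last_month(current: float, previous: float) -> float:
    return round(current - previous, 2)
```

With per-step records like these, the per-step accuracy in the compound math stops being a guess: you can count `ok` versus `error` for each step name and see which link in the chain is weakest.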

Design for human handoff, not full automation. The best enterprise agents I’ve seen handle 70-80% of cases autonomously and route the rest to humans with full context. That’s not a failure; that’s the design: human-in-the-loop. The agent that tries to handle 100% of cases is the one that ends up handling 0% reliably. And once it fails even one small task, you lose the end-user trust you spent years building.

The Takeaway

The compound failure math is unforgiving. The fix isn’t better models — it’s better system design. Circuit breakers, observability, explicit contracts, hard budgets, and graceful degradation.

Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027 (Gartner, Jun 2025). The compound failure math is why. But it’s solvable if you stop treating your agent as a magic box that will solve any problem and start treating it as an engineering system.

Read more: Gartner